ISO 26262 - Dependent Failure Analysis (DFA):

Dependent failure analysis aims at identifying failures that may hamper the required independence or freedom from interference between given elements (hardware, software, firmware), which may ultimately lead to the violation of a safety requirement or safety goal.

Hence, Dependent Failure Analysis consists of the following 2 parts:

- Validate Freedom from Interference (FFI) between elements.

- Validate Independence between elements

Difference between Common Cause Failures and Cascading Failures:

Cascading Failures:

- Failure of an element of an item that causes another element or elements of the same item to fail.

- Cascading failures are dependent failures that are not common cause failures.

Common Cause Failures:

- Failure of 2 or more elements of an item resulting from a single specific event or root cause.

- Common cause failures are dependent failures that are not cascading failures.

Dependent failure types:

Dependent failures can arise from both systematic failures and random hardware failures.

How to identify Dependent Failures?

Dependent failures can be identified from the safety analyses.

Deductive analyses:

- Examination of cut sets or repeated identical events of an FTA can indicate potential for dependent failures.

Inductive analyses:

- Similar parts or components with similar failure modes that appear several times in an FMEA can give additional information about the potential for dependent failures.
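The deductive check described above can be sketched in a few lines: given the minimal cut sets of a fault tree, list the basic events that appear in more than one cut set. This is only an illustration. The cut sets and event names below are invented, and a repeated event is a candidate for closer analysis, not proof of a dependent failure.

```python
from collections import Counter


def repeated_events(cut_sets):
    """Return basic events that appear in more than one minimal cut set."""
    counts = Counter(event for cut_set in cut_sets for event in set(cut_set))
    return {event: n for event, n in counts.items() if n > 1}


# Hypothetical minimal cut sets for one top event (names are assumptions).
cut_sets = [
    {"PSU_5V_LOSS"},
    {"ADC_CH1_STUCK", "ADC_CH2_STUCK"},
    {"SPI_DRIVER_FAULT", "ADC_CH1_STUCK"},
    {"SPI_DRIVER_FAULT", "WATCHDOG_FAULT"},
]

candidates = repeated_events(cut_sets)
for event, n in sorted(candidates.items()):
    print(f"{event} appears in {n} cut sets: check for dependent failures")
```

The same counting idea applies to an FMEA export: group rows by component type and failure mode, then review the groups that occur several times.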

DFA Part 1: Freedom from Interference (FFI):

Analysis of interactions between software elements:

- The FFI should be analyzed between software elements (determined during software architecture design).

- Since FFI is based on the analysis of cascading failures only, the data exchange between software elements should be analyzed.

- The ASIL of the software elements (source and destination) between which data exchange takes place should be analyzed.

- If data flows from a lower ASIL element to a higher ASIL element, the interaction should be marked for analysis.

- These data exchanges should be analyzed for freedom from interference.

- If interference is plausible, the mechanisms to prevent, detect and mitigate the relevant faults are assessed during the analysis.
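As a rough sketch of the marking step above, the following code flags every data exchange whose source has a lower ASIL than its destination. The element names, signals and ASIL assignments are hypothetical; a real project would take them from the software architecture design.

```python
# Ordering of integrity levels from lowest to highest.
ASIL_RANK = {"QM": 0, "A": 1, "B": 2, "C": 3, "D": 4}


def exchanges_to_analyze(exchanges, asil_of):
    """Return the exchanges that flow from a lower ASIL to a higher ASIL element."""
    flagged = []
    for source, destination, signal in exchanges:
        if ASIL_RANK[asil_of[source]] < ASIL_RANK[asil_of[destination]]:
            flagged.append((source, destination, signal))
    return flagged


# Hypothetical software elements and their ASILs (assumptions for illustration).
asil_of = {"Diagnostics": "QM", "RelayControl": "B", "CurrentMonitor": "B"}

# (source, destination, signal) triples taken from an imagined architecture.
exchanges = [
    ("Diagnostics", "RelayControl", "service_request"),
    ("CurrentMonitor", "RelayControl", "rms_current"),
    ("RelayControl", "Diagnostics", "relay_state"),
]

flagged = exchanges_to_analyze(exchanges, asil_of)
for source, destination, signal in flagged:
    print(f"Analyze FFI: {source} -> {destination} ({signal})")
```

Only the QM to ASIL B exchange is flagged here. Exchanges between elements of equal ASIL, or from higher to lower ASIL, are left out of this list.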

Analyze the interaction between software elements for the following possible failures.

Timing and Execution:

- blocking of execution.

- deadlocks – several processes blocking each other by waiting for events that only those same processes can trigger.

- livelocks – several processes keeping each other in an infinite loop.

- incorrect allocation of execution time.

- incorrect synchronization between software elements.

Memory:

- corruption of content.

- read or write access to memory allocated to another software element.

Exchange of Information:

- repetition of information.

- loss of information.

- delay of information.

- insertion of information.

- masquerade or incorrect addressing of information.

- incorrect sequence of information.

- corruption of information.

- asymmetric information sent from a sender to multiple receivers.

- information from a sender received by only a subset of the receivers.

- blocking access to a communication channel.
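Several of the information exchange failures listed above (repetition, loss, incorrect sequence, delay, corruption) are commonly detected on the receiver side with a sequence counter, a checksum and a timeout. The sketch below illustrates that idea only. The frame layout, the class name `Receiver` and the use of CRC-32 are assumptions for the example and are not taken from any particular standard profile.

```python
import zlib


def pack(counter: int, payload: bytes) -> bytes:
    """Build a frame: 1-byte sequence counter + payload + 4-byte CRC-32."""
    body = bytes([counter & 0xFF]) + payload
    return body + zlib.crc32(body).to_bytes(4, "big")


class Receiver:
    """Checks each received frame against the previous one."""

    def __init__(self, timeout_s: float):
        self.timeout_s = timeout_s
        self.last_counter = None
        self.last_time = None

    def check(self, frame: bytes, now: float) -> str:
        body, crc = frame[:-4], frame[-4:]
        if zlib.crc32(body).to_bytes(4, "big") != crc:
            return "corruption"
        counter = body[0]
        result = "ok"
        if self.last_counter is not None:
            step = (counter - self.last_counter) % 256
            if step == 0:
                result = "repetition"
            elif step != 1:
                result = "loss_or_wrong_sequence"
            elif now - self.last_time > self.timeout_s:
                result = "delay"
        self.last_counter = counter
        self.last_time = now
        return result


rx = Receiver(timeout_s=0.1)
results = [
    rx.check(pack(0, b"\x10"), now=0.00),  # first frame
    rx.check(pack(1, b"\x11"), now=0.05),  # next counter, in time
    rx.check(pack(1, b"\x11"), now=0.06),  # same counter again
    rx.check(pack(3, b"\x13"), now=0.07),  # counter 2 is missing
    rx.check(pack(4, b"\x14"), now=0.50),  # arrives after the timeout
]

damaged = bytearray(pack(5, b"\x15"))
damaged[1] ^= 0xFF  # flip bits in the payload
results.append(rx.check(bytes(damaged), now=0.55))
print(results)
```

Masquerading, asymmetric delivery and blocked channel access are not covered by this sketch; they would need additional measures such as sender identifiers or receiver acknowledgements.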

Table 1:

Analysis of interaction between software elements for possible failures.

Table 2:

Analysis of interaction between software elements for possible failures.

DFA Part 2: Independence

Identification of couples:

Architectural units are grouped into couples so that independence between them can be demonstrated.

The couples can be identified based on the following factors.

- Similar and dissimilar redundant elements

- Different functions implemented with identical software or hardware elements

- Functions and their respective safety mechanisms

- Partitions of functions or software elements

- Physical distance between hardware elements, with or without barrier

- Common external resources

The above criteria are described in detail below.

Similar and dissimilar redundant elements

In this example, 2 redundant software functionalities are implemented using different algorithms to provide an output (SO1, which is safety related).

- SWF1: Multiplication using the actual multiplication operator

- SWF2: Multiplication using repeated addition

So, to determine the integrity of this output (SO1), both SWF1 and SWF2 need to be identified as a couple. They form a couple because they are used to provide a single output, which may fail for the following reasons.

SWF1 block failure (for example, SWF1 not being executed completely because of an interrupt function call, or memory corruption of the code flash area where SWF1 or any of its variables is stored). The same applies to SWF2.
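A minimal sketch of this couple is shown below. The two multiplication functions follow the description above; the comparison step in `compute_so1` and its error handling are assumptions added for illustration, since the text does not say how the two results are combined.

```python
def swf1_multiply(a: int, b: int) -> int:
    """SWF1: multiplication using the multiplication operator."""
    return a * b


def swf2_multiply(a: int, b: int) -> int:
    """SWF2: multiplication using repeated addition."""
    result = 0
    for _ in range(abs(b)):
        result += a
    return result if b >= 0 else -result


def compute_so1(a: int, b: int) -> int:
    """Produce SO1 only if both diverse implementations agree (assumed comparator)."""
    r1 = swf1_multiply(a, b)
    r2 = swf2_multiply(a, b)
    if r1 != r2:
        raise RuntimeError("SWF1/SWF2 mismatch: SO1 is not trustworthy")
    return r1


print(compute_so1(6, 7))
print(compute_so1(3, -4))
```

The comparison catches a failure that affects only one of the two functions. A fault that reaches both, such as a corrupted input value passed to SWF1 and SWF2 alike, would go unnoticed, which is why the couple is analyzed for dependent failures.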

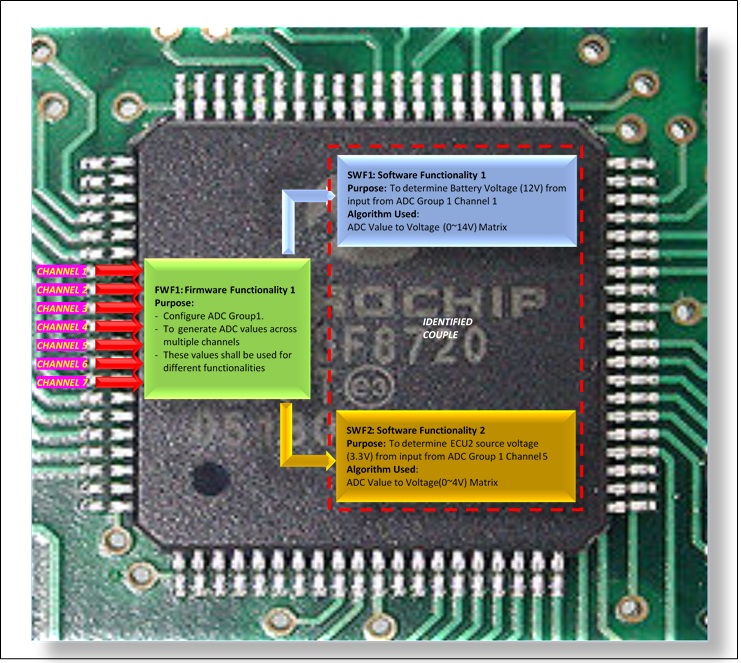

Different functions implemented with identical software or hardware elements

When two software functionalities are implemented using a common or identical firmware element (e.g. ADC firmware), these software elements can be considered as a couple.

In this example, the Power IC measures the voltage and current flowing through the Line and Neutral. The IC then sends the values over the SPI line to the Main Microcontroller for processing.

2 different software functionalities (SWF2, SWF3) use the SPI driver firmware functionality (FWF1) to get the raw voltage and current values.

Both software functionalities (SWF2, SWF3) then use the square functionality (SWF1) to determine the RMS voltage and current.

Since SWF2 and SWF3 are different functions implemented through the same firmware element (FWF1), they can be considered as a couple.
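The identification of such couples can be sketched as a search for functions that use at least one common element. The usage map for SWF2 and SWF3 follows the example above (both use FWF1 and SWF1); SWF4 and FWF2 are hypothetical additions that show a function which shares nothing.

```python
from itertools import combinations


def couples_by_shared_element(uses):
    """Map each pair of functions to the elements they both depend on."""
    couples = {}
    for f1, f2 in combinations(sorted(uses), 2):
        shared = uses[f1] & uses[f2]
        if shared:
            couples[(f1, f2)] = shared
    return couples


uses = {
    "SWF2": {"FWF1", "SWF1"},  # voltage path: SPI driver + square function
    "SWF3": {"FWF1", "SWF1"},  # current path: SPI driver + square function
    "SWF4": {"FWF2"},          # hypothetical function with its own driver
}

couples = couples_by_shared_element(uses)
for (f1, f2), shared in couples.items():
    print(f"Couple {f1}/{f2} shares: {sorted(shared)}")
```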

Functions and their respective safety mechanisms

In this example, the software functionality (SWF1) controls the Relay Control ICs (1, 2) for live and neutral. The software functionality (SWF2) evaluates the feedback from the voltage/current monitoring IC, which monitors the flow of current. Hence, once SWF1 commands the relay to stop the current flow, SWF2 acts as a safety mechanism for SWF1.

So, both SWF1 and SWF2 can be identified as a couple for analysis to check if there is any dependency.

Partitions of functions or software elements

In this example, SWF1 is a non-safety-related (QM) functionality located in block 1 of flash memory, and SWF2 is a safety-related (ASIL) functionality located in block 2 of flash memory. Both share data through a shared memory section accessible to both blocks. The partitioning is meant to ensure that a modification of the QM functionality does not affect the ASIL implementation. Such functionalities can be treated as a couple for analysis. Factors such as any impact of reading data from the common memory should be analyzed for this couple.

Physical distance between hardware elements, with or without barrier

1. Power failure to both the Relay Control IC and the Main Microcontroller

2. Relay Control IC Failure

3. Main Microcontroller IC failure (or Main Microcontroller is not able to communicate with Relay Control IC)

So, in a hypothetical scenario where the Main Microcontroller is destroyed by very strong electromagnetic interference (EMI) and the relays are no longer under its control, the relays should be in the off state, so that the switches are closed. Hence, both HWEL1 and HWEL2 should be considered as a couple for analysis.

Common external resources

Table 3: Couple identifications

Potential dependent failures should be identified for each identified couple.

These potential failures should then be assessed for the plausibility that they violate independence.

Table 4: Failure type identification

Table 5: Detailed Analysis
